Co-browse Architecture

Important

Starting in 9.0.005.15, Cassandra support is deprecated in Genesys Co-browse, and Redis is the default database for new customers. Support for Cassandra will be discontinued in a later release.

Architecture Diagram

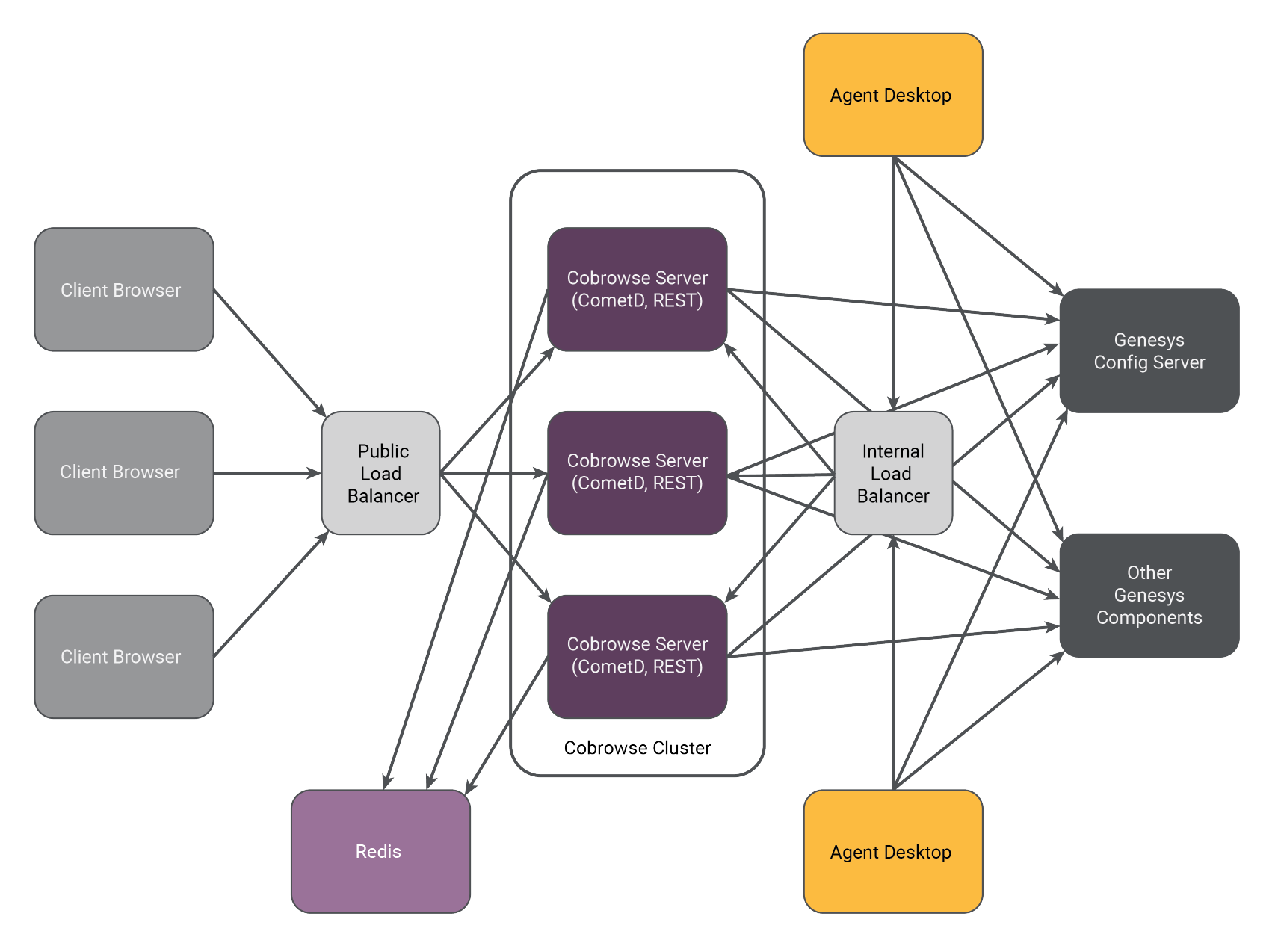

The following diagram shows an example of a three node cluster implementation of Co-browse:

- Each Co-browse server has the same role in the cluster and must be identically configured.

- Each Co-browse server hosts the following:

  - CometD server with Co-browse

  - Live Session API

- A Co-browse cluster is formed through a load balancer/reverse proxy. See Cluster Configuration.

- Co-browse servers are usually deployed in the back-end server environment and made accessible through a load balancer/reverse proxy.

- Internal Co-browse Server resources are secured at the network level: they are not exposed through the Public Load Balancer. Internal applications reach Co-browse Server resources through the Internal Load Balancer.

- Agent Desktops connect to the Co-browse Server to receive web page representations from the Client Browser.

- The Co-browse plugin for Genesys Workspace Desktop Edition (Agent Desktop) reports Co-browse statistics via attached data on primary interactions.

- The Client Browser initiates a Co-browse session and transmits web page content to the Agent Desktop through the Co-browse Server.
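Because every Co-browse server has the same role and configuration, a load balancer can treat the nodes as interchangeable. The Python sketch below shows the simplest such policy, round-robin selection, over three hypothetical node names. It is a generic illustration, not Genesys load balancer configuration, and it deliberately ignores session affinity and health checks, which a production load balancer/reverse proxy may need to handle (see Cluster Configuration).

```python
from itertools import cycle
from typing import Iterator, List


class RoundRobinBalancer:
    """Toy balancer that hands out interchangeable nodes in a fixed rotation."""

    def __init__(self, nodes: List[str]) -> None:
        if not nodes:
            raise ValueError("at least one node is required")
        # cycle() repeats the node list endlessly: n1, n2, n3, n1, ...
        self._rotation: Iterator[str] = cycle(list(nodes))

    def pick(self) -> str:
        # In this toy model any node can serve the request, so take the next one.
        return next(self._rotation)


if __name__ == "__main__":
    # Hypothetical host names for a three-node cluster.
    balancer = RoundRobinBalancer(["cobrowse-1", "cobrowse-2", "cobrowse-3"])
    print([balancer.pick() for _ in range(4)])
    # prints ['cobrowse-1', 'cobrowse-2', 'cobrowse-3', 'cobrowse-1']
```

Round-robin is chosen here only because it is the easiest policy to read; real deployments often use other strategies.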
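The relay between the Client Browser and the Agent Desktop can be pictured as publish/subscribe messaging keyed by session, which is the general model CometD offers through named channels. The following Python sketch is a toy, in-memory illustration of that flow only. The `CobrowseRelay` class, the `/cobrowse/{session_id}/page` channel naming, and the message fields are hypothetical and do not reflect the Co-browse Server's actual API, channel names, or wire protocol.

```python
from collections import defaultdict
from typing import Callable, Dict, List

# A message is a flat dict of strings; a subscriber is a callback taking one.
Message = Dict[str, str]
Subscriber = Callable[[Message], None]


class CobrowseRelay:
    """Toy in-memory relay between a client browser and agent desktops (hypothetical)."""

    def __init__(self) -> None:
        # Channel name -> callbacks subscribed to that channel.
        self._subscribers: Dict[str, List[Subscriber]] = defaultdict(list)

    @staticmethod
    def channel_for(session_id: str) -> str:
        # Hypothetical naming scheme: one page-content channel per session.
        return f"/cobrowse/{session_id}/page"

    def subscribe(self, session_id: str, callback: Subscriber) -> None:
        # Agent side: listen for page content in this session.
        self._subscribers[self.channel_for(session_id)].append(callback)

    def publish(self, session_id: str, message: Message) -> int:
        # Client side: forward page content to every subscriber of the session.
        # .get() avoids creating empty entries for sessions nobody joined.
        listeners = self._subscribers.get(self.channel_for(session_id), [])
        for callback in listeners:
            callback(message)
        return len(listeners)  # number of subscribers that received the message


if __name__ == "__main__":
    relay = CobrowseRelay()
    agent_view: List[Message] = []
    relay.subscribe("session-42", agent_view.append)  # Agent Desktop joins
    delivered = relay.publish(
        "session-42", {"url": "https://example.com/", "html": "<body>...</body>"}
    )
    print(delivered, agent_view)
```

Keying channels by session keeps one customer's page content from reaching agents in other sessions; a publish to a session with no subscribers simply reaches nobody.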

This page was last edited on June 25, 2019, at 18:16.
